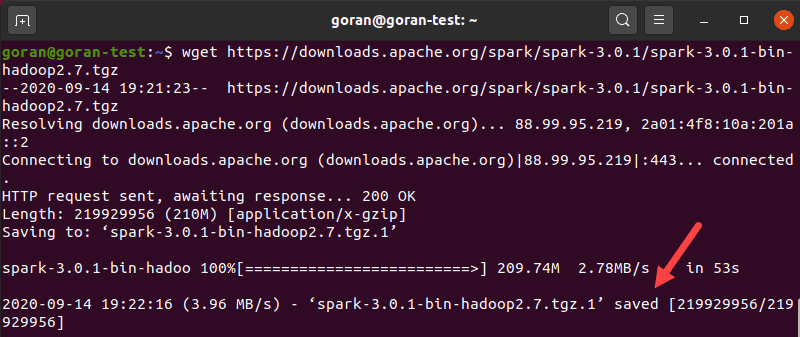

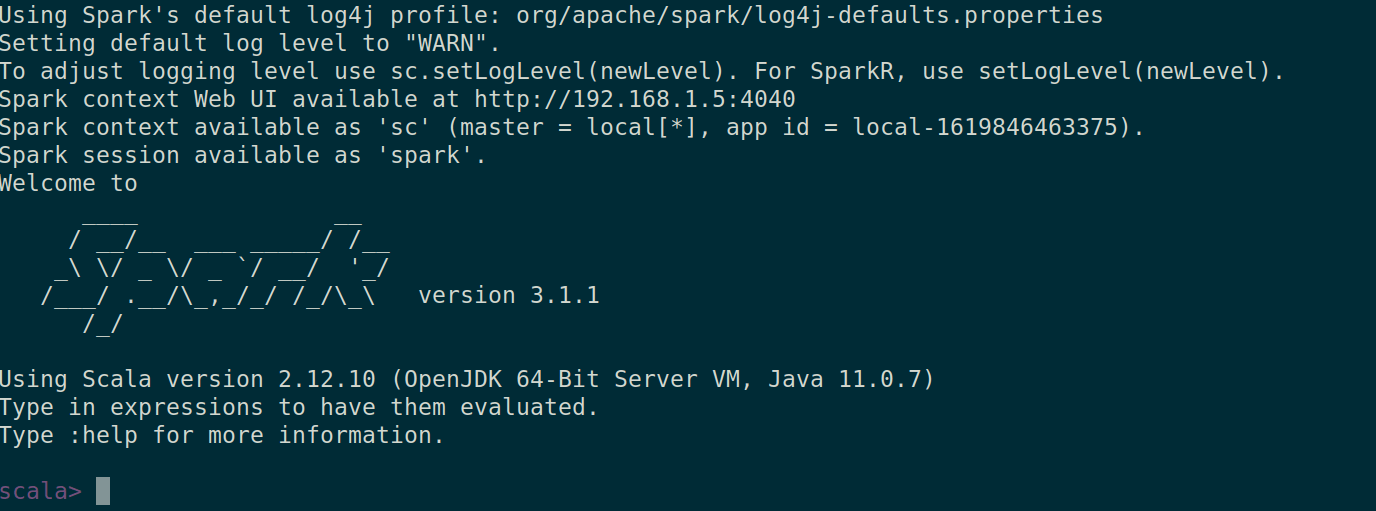

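Create a symlink to the Scala installation:

`sudo ln -s /usr/lib/scala-2.10.1 /usr/lib/scala`

Once installed, check the Scala version:

`scala -version`

Install Apache Spark by downloading the release archive with `wget` and unpacking it in your home directory, then point `SPARK_HOME` at the unpacked directory:

`export SPARK_HOME=$HOME/spark-2.2.1-bin-hadoop2.7`

Set up some environment variables before you start Spark. The `SPARK_HOME` value must match the directory you actually unpacked (for example `hadoop2.6` instead of `hadoop2.7` if you downloaded that build):

`echo 'export PATH=$PATH:/usr/lib/scala/bin' >> ~/.bash_profile`

`echo 'export SPARK_HOME=$HOME/spark-2.2.1-bin-hadoop2.7' >> ~/.bash_profile`

`echo 'export PATH=$PATH:$SPARK_HOME/bin' >> ~/.bash_profile`

The standalone Spark cluster can be started manually, i.e. by executing the start script on each node, or simply by using the available launch scripts. For testing we can run master and slave daemons on the same machine.

The Spark master listens on port 7077 by default. This is the `spark://` endpoint that workers and applications connect to, not an HTTP port. The master web UI is served over HTTP on port 8080, the worker web UI on port 8081, and port 6066 is used for REST job submission. Open these ports in the firewall:

`firewall-cmd --permanent --zone=public --add-port=6066/tcp`

`firewall-cmd --permanent --zone=public --add-port=7077/tcp`

`firewall-cmd --permanent --zone=public --add-port=8080-8081/tcp`

Rules added with `--permanent` only take effect after a reload: `firewall-cmd --reload`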
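Appending with `echo ... >>` adds a duplicate line every time the commands are re-run. As a minimal sketch, the environment variable step above could be wrapped in a small helper that only appends a line when it is not already present. The function names `append_once` and `setup_spark_env` are hypothetical, and the `SPARK_HOME` directory is assumed to be the `hadoop2.7` build; adjust it to whatever directory you actually unpacked.

```shell
#!/usr/bin/env bash
# Sketch only: append_once and setup_spark_env are illustrative helper names,
# not part of Spark or Scala.

# Append a line to a file unless the exact same line is already there.
# $1 = target file, $2 = line to add
append_once() {
    local file="$1"
    local line="$2"
    touch "$file"
    # -q quiet, -x match the whole line, -F treat the pattern as a fixed string
    if ! grep -qxF -- "$line" "$file"; then
        printf '%s\n' "$line" >> "$file"
    fi
}

# Write the three export lines from the tutorial into the given profile file.
# $1 = profile file, e.g. "$HOME/.bash_profile"
setup_spark_env() {
    local profile="$1"
    # Single quotes keep $PATH, $HOME and $SPARK_HOME unexpanded in the file.
    append_once "$profile" 'export PATH=$PATH:/usr/lib/scala/bin'
    # Assumed directory name: must match the archive that was unpacked.
    append_once "$profile" 'export SPARK_HOME=$HOME/spark-2.2.1-bin-hadoop2.7'
    append_once "$profile" 'export PATH=$PATH:$SPARK_HOME/bin'
}
```

To use it, call `setup_spark_env "$HOME/.bash_profile"` and then run `source ~/.bash_profile` (or open a new login shell) so the variables take effect. Running it a second time leaves the file unchanged.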
